Using Holocaust Testimonies as Research Data

Resources for researchers

Martin Wynne reports on a recent workshop, which was a collaboration between CLARIN and the European Holocaust Research Infrastructure.

Oral testimonies of survivors of the Holocaust, recorded in audio and video interviews, are records of huge historical importance. Can language technologies help to make them more usable as research data? A workshop was held at King’s College London from 15 to 17 May 2023, hosted by the national centre for EHRI in the UK and co-organized with CLARIN. The event was a first attempt to bring together practitioners associated with these two research infrastructures at the European level, and to plan ongoing collaboration and joint initiatives. The workshop aimed to start new collaborations, as well as to build on and learn from existing collaborations at local and national levels, for example in Czechia and Austria, where CLARIN and EHRI centres are based in the same units, and in Italy with the Ravensbrück project. The CLARIN centre in Prague has already made a demo of including EHRI materials in an interface for language resources, and written a blog post for the EHRI website here.

Among the participants were historians from Austria, Belgium, Greece, Hungary, Italy, Poland, and the UK, archivists from the United States Holocaust Memorial Museum, the Fortunoff Video Archive for Holocaust Testimonies at Yale University, and the Ghetto Fighters Museum in Israel, as well as linguists, technologists and data scientists from both EHRI and CLARIN, including from Czech, Dutch, Italian and UK CLARIN centres.

The workshop was a first step to share data, tools, know-how and experiences. The USHMM shared a number of testimonies with participants from their extensive collections, which can be explored here.

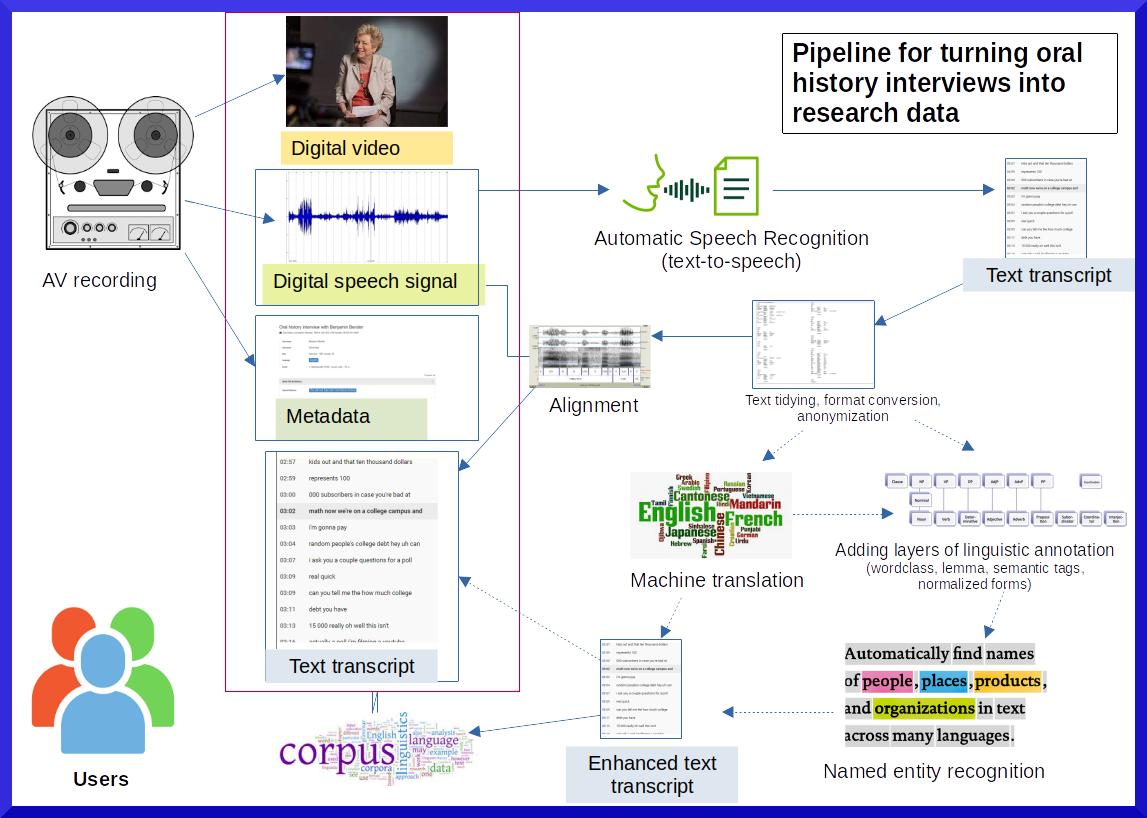

It became clear in the technical discussions and hands-on sessions that there was a rich set of existing tools for processing data at various stages of the pipeline for turning oral history interviews into research data. Most of these were known to some participants but not to all, so we started to assemble a list of them. This blog post attempts to disseminate these resources to a wider audience. It is expected that there will be a number of outcomes from the workshop, including future workshops, joint projects and further blog posts.

We started with a schematic diagram to help us to identify the different stages in the processing of audio-visual data, especially in relation to the generation and enhancement of text transcripts. This was presented with a recognition that it was incomplete, and certainly didn't cover all of the relevant aspects and dimensions, but in the hope that it might help to frame the discussion.

Schematic framework for turning interviews into research data

Conducting an Oral History Interview

The Oral History Association provides guidance on best practice, and an excellent and comprehensive bibliography:

It was agreed at the workshop that more practical guidance on technical aspects of interviewing would be useful in order to ensure that recordings are optimal for ongoing processing tasks, such as transcription, turn taking, and speaker identification (also known as diarization).

Transcription (ASR)

An important part of the pipeline is the extraction of transcripts from audio interviews. It was acknowledged that the rapidly evolving field of AI is making powerful tools for automatic speech recognition (ASR) much more easily accessible to non-specialist researchers and data curators. While this opens up a number of exciting possibilities, the fast pace of change makes it difficult to keep up with the state of the art, with frequent updates to existing tools and the sudden arrival of new players on the scene.

- Whisper from OpenAI can be used online, and can also be installed locally and run within Python programs (Maria Dermentzi from King's demonstrated its use in a Google Colab notebook at the workshop). While the latter option avoids the problems of uploading the data to a program on a commercial platform, workshop participants discovered that installing and using Whisper within Python programs and notebooks was not for the faint-hearted, and required a reasonably high degree of technical knowledge in a number of areas.

- Some other Whisper resources:

- Developer forums for lots of code examples and add-ons

- Whisper Jax for 70x faster transcription than standard Whisper. Github here

- TheirStory.io uses Pyannote for speaker diarization on top of Whisper (whisper github here): https://github.com/pyannote/pyannote-audio

- Deepgram has a model that uses Whisper and transcribes really fast (5-10 minutes for a 2 hour interview). Deepgram is accessible via API.

- University of West Bohemia used Wav2Vec 2.0 models, which will soon be made available via a web service from LINDAT (the CLARIN centre at Charles University, Prague)

- The CLARIN Transcription Portal (requires authorization via single sign-on) will soon be updated with Whisper to perform the underlying ASR
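For readers who want to try the local route, the following is a minimal sketch rather than a recipe from the workshop. It assumes that the open-source `openai-whisper` Python package and `ffmpeg` are installed; the file name `interview.mp3` and the helper function names are hypothetical. It transcribes a recording and returns one line per segment, prefixed with the segment's start time.

```python
def format_timestamp(seconds: float) -> str:
    """Render a time offset in seconds as HH:MM:SS (fractions are dropped)."""
    total = int(seconds)
    hours, remainder = divmod(total, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def segments_to_lines(segments):
    """Turn Whisper-style segments (dicts with 'start' and 'text') into timestamped lines."""
    return [
        f"[{format_timestamp(seg['start'])}] {seg['text'].strip()}"
        for seg in segments
    ]


def transcribe(path: str, model_name: str = "base"):
    """Transcribe an audio file with Whisper and return timestamped lines.

    Assumption: the `openai-whisper` package is installed. It is imported
    here so that the helpers above can be used without it.
    """
    import whisper

    model = whisper.load_model(model_name)
    result = model.transcribe(path)
    return segments_to_lines(result["segments"])


# Usage (needs the package, ffmpeg and a real audio file; the name is hypothetical):
#     for line in transcribe("interview.mp3"):
#         print(line)
```

Larger models are generally more accurate but slower and need more memory, so it may be worth starting with a small model on a short excerpt before processing a whole collection.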

Translation

The translation of transcripts into other languages is vital for a number of use cases, including providing a searchable common text layer in a lingua franca (such as English), as well as making transcripts more accessible to different communities.

- The University of West Bohemia team used https://lindat.mff.cuni.cz/services/translation/

- The University of Oxford team used DeepL, but the free and Pro levels of service proved to be quite limited in the number of documents that could be processed. Further investigation of options for use of the API and local installations is planned.

Timecode Indexing

This is a technology for inserting, editing and correcting the alignment of transcripts with the audio stream.

- TheirStory uses https://c2dh.github.io/tim as the base for a markdown-based indexing module (with side-by-side transcript), developed together with the University of Luxembourg C2DH and Hyperaudio as a free open source module (MIT licence). You can see the general workflow / blog post on the tool here.

Similarly, transcript-based video technologies are also available, and some pointers to available resources were given:

- Hyperaudio pad (demo, github) – created by Mark Boas and Laurian Gridinoc

- AutoEdit – created by Pietro Passarelli

- Origins of AutoEdit and documentary Paper Editing process
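To make the idea of timecode indexing a little more concrete, here is a small sketch that is not taken from any of the tools listed above. It assumes that a transcript is held as a list of segments sorted by start time, each with hypothetical `start`, `end` and `text` fields in seconds. It shows two basic operations: looking up the segment being spoken at a given playback time, and shifting all timecodes by a constant offset to correct a misalignment.

```python
from bisect import bisect_right


def segment_at(segments, t):
    """Return the segment that covers playback time t (seconds), or None.

    Assumes segments are sorted by 'start' and do not overlap.
    """
    starts = [seg["start"] for seg in segments]
    i = bisect_right(starts, t) - 1  # last segment starting at or before t
    if i >= 0 and t < segments[i]["end"]:
        return segments[i]
    return None


def shift_timecodes(segments, offset):
    """Return a copy of the segments with every timecode moved by offset seconds."""
    return [
        {
            **seg,
            "start": max(0.0, seg["start"] + offset),
            "end": max(0.0, seg["end"] + offset),
        }
        for seg in segments
    ]


# Invented example data, not from any real interview.
SEGMENTS = [
    {"start": 0.0, "end": 4.0, "text": "Could you tell us where you were born?"},
    {"start": 4.0, "end": 9.5, "text": "I was born in a small town."},
]

print(segment_at(SEGMENTS, 5.0)["text"])
print(shift_timecodes(SEGMENTS, 1.5)[1]["start"])
```

Real alignment problems are rarely a single constant offset, which is one reason why interfaces for manual correction remain useful.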

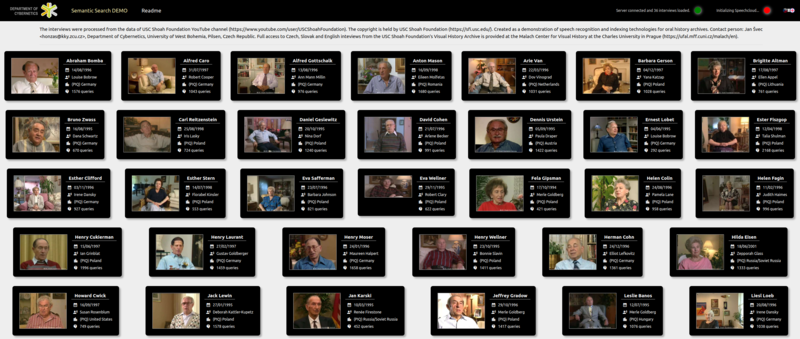

Semantic Search

A powerful addition to search interfaces is to allow the user to search not for specific words or phrases, but for passages with a meaning related to a search phrase. Two examples were demonstrated:

- William Mattingly’s semantic search of USHMM transcripts: http://wjbmattingly.com/flask-annoy/

- University of West Bohemia demo: https://malach-aq.kky.zcu.cz, using data from USC Shoah Foundation's Visual History Archive, provided at the Malach Center for Visual History at Charles University in Prague. UWB repo (code examples): https://github.com/kitt10/semantic_search. There is a CLARIN Impact Story from the Czech CLARIN consortium about the semantic search demonstrated at the workshop.
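The ranking step behind this kind of search can be sketched in a few lines. This is an illustration under stated assumptions, not a description of how either demo is implemented: in a real system each passage and each query would be turned into a vector by a sentence-embedding model, whereas here the three-dimensional vectors are hand-made placeholders and the passages are invented. Passages are ranked by the cosine similarity between their vector and the query vector.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_vec, passages, top_k=2):
    """Return the top_k (score, text) pairs, most similar first."""
    scored = [(cosine(query_vec, vec), text) for text, vec in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Invented passages with toy vectors standing in for real embeddings.
PASSAGES = [
    ("Food was scarce and we were always hungry.", [0.9, 0.1, 0.0]),
    ("My father played the violin at home.", [0.0, 0.2, 0.9]),
    ("We never had enough bread that winter.", [0.8, 0.3, 0.1]),
]

# Placeholder for the embedding of a query such as "lack of food".
QUERY = [1.0, 0.2, 0.0]

for score, text in search(QUERY, PASSAGES):
    print(f"{score:.2f}  {text}")
```

Note that neither of the two top-ranked passages needs to contain the words of the query. For large collections, the exhaustive comparison used here is usually replaced by an approximate nearest-neighbour index.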

Named Entities and Geo-Location

Paul Rayson and Ignatius Ezeani from Lancaster University presented their work on building spatial entity recognition. This work represents an important step in recognizing and categorizing geographic locations in testimonies, enabling people to search for places in more sophisticated ways, as well as allowing location-based visualizations and queries.

- Project website: https://spacetimenarratives.github.io/

- Rule-based regex methods: https://github.com/SpaceTimeNarratives/demo/blob/main/spatial_narrative_demo1.ipynb

- Regex + spaCy NER + semantic tagging: https://github.com/SpaceTimeNarratives/demo/blob/main/spatial_narrative_demo2.ipynb

- spaCy entity ruler: https://github.com/SpaceTimeNarratives/demo/blob/main/spatial_narrative_demo3.ipynb

- Demo app (old version): https://spacetimenarratives.streamlit.app/
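As a flavour of what a rule-based approach can look like, the sketch below uses Python's standard `re` module. It is not the Lancaster team's code: the small gazetteer, the list of spatial prepositions and the function name are all assumptions made for illustration. It finds a spatial preposition followed by a known place name and reports the pair together with the character offset of the place name.

```python
import re

# Hypothetical mini-gazetteer; a real one would be far larger.
GAZETTEER = ["Prague", "Vienna", "Warsaw"]

# Longest names first, so that longer names win over shorter ones that share a prefix.
_PLACES = "|".join(
    re.escape(name) for name in sorted(GAZETTEER, key=len, reverse=True)
)

# A spatial preposition (case-insensitive) followed by a gazetteer entry.
PATTERN = re.compile(
    rf"\b(?P<prep>(?i:in|to|from|near|through))\s+(?P<place>{_PLACES})\b"
)


def find_spatial_mentions(text):
    """Return (preposition, place, offset of place) for each match in text."""
    return [
        (m.group("prep").lower(), m.group("place"), m.start("place"))
        for m in PATTERN.finditer(text)
    ]


# Invented example sentence.
sentence = "We travelled from Vienna to Prague by train."
for prep, place, offset in find_spatial_mentions(sentence):
    print(prep, place, offset)
```

Rules like these are transparent and easy to adjust, but they miss places that are not in the gazetteer, which is where statistical NER such as spaCy can complement them.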

The EHRI controlled vocabularies were also noted as an important resource which could be combined, for example, with search, annotation and visualization services:

- EHRI Vocabularies: https://portal.ehri-project.eu/vocabularies

A handy tool for qualitative coding was noted:

- Taguette: https://www.taguette.org/

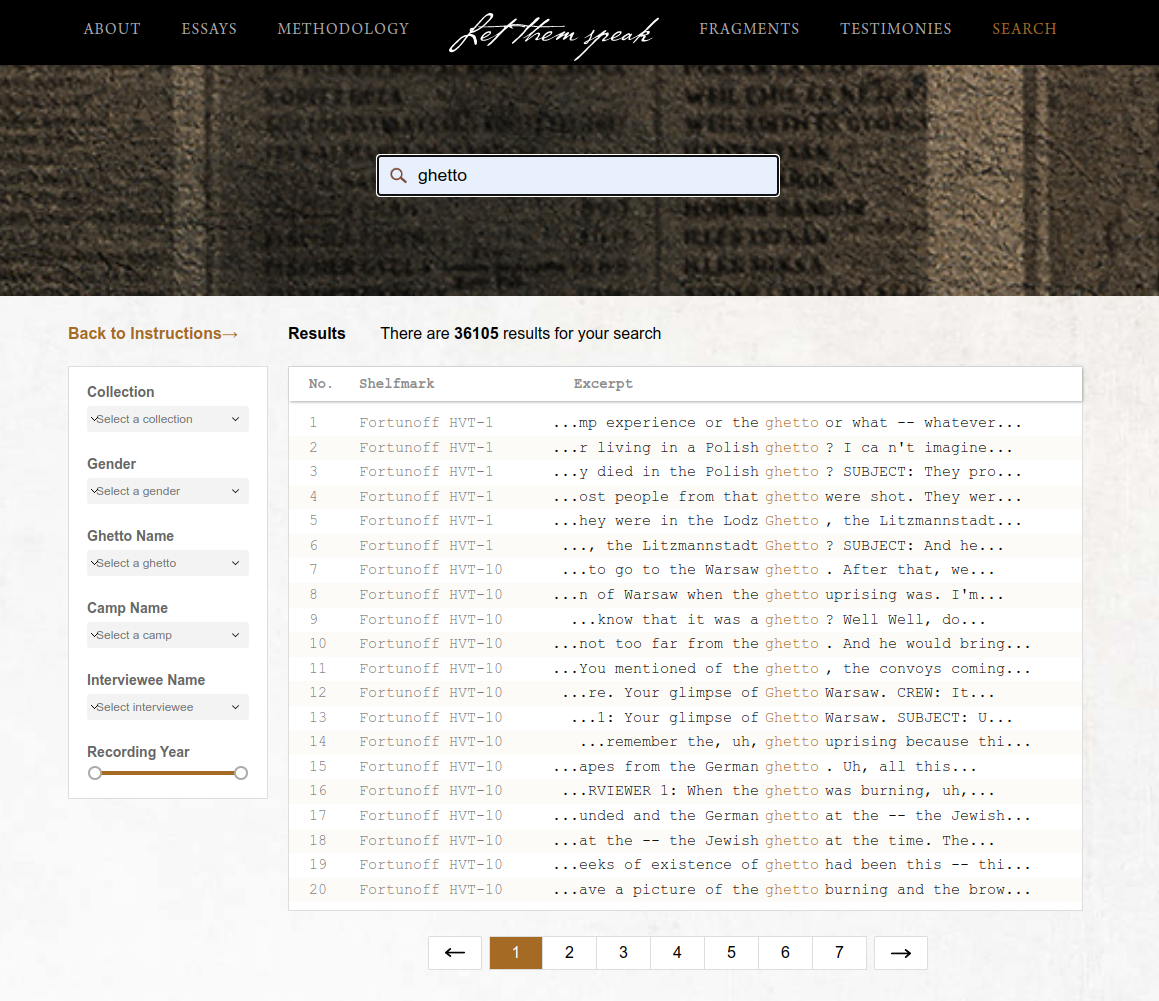

Corpus interfaces

These are interfaces for searching and exploring transcripts that have been aggregated to form a corpus. A fully working online example of this approach can be seen here:

- Let Them Speak: https://lts.fortunoff.library.yale.edu/ (and more information at https://dhlab.yale.edu/projects/let-them-speak/)

Martin Wynne and Caitlin Wilson presented an experiment to make parallel, aligned transcripts and their translations searchable here:

- CQPweb at Oxford: http://cqpweb.ling-phil.ox.ac.uk/CQPweb/ (login credentials available on request from martin.wynne at ling-phil.ox.ac.uk)
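The basic idea of searching a parallel, aligned corpus can be illustrated with a toy example. This sketch has nothing to do with how CQPweb works internally; it simply assumes that the corpus is a list of aligned (original, translation) sentence pairs, here invented German and English sentences, and returns every pair in which the search term occurs on either side.

```python
import re


def search_parallel(pairs, term):
    """Return (index, original, translation) for pairs where term occurs in either text."""
    pattern = re.compile(rf"\b{re.escape(term)}\b", re.IGNORECASE)
    return [
        (i, original, translation)
        for i, (original, translation) in enumerate(pairs)
        if pattern.search(original) or pattern.search(translation)
    ]


# Invented aligned sentence pairs, not from any real transcript.
PAIRS = [
    ("Wir wohnten in Wien.", "We lived in Vienna."),
    ("Mein Vater war Lehrer.", "My father was a teacher."),
]

for index, original, translation in search_parallel(PAIRS, "Vienna"):
    print(index, original, "|", translation)
```

Because the pairs are aligned, a search in the lingua franca leads straight back to the passage in the original language.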

Further development of corpus resources shows great potential, but there are a number of issues to address in terms of text availability, format conversion, annotation, translation, alignment, etc., as well as connecting back to the audio and video interviews, and enabling interoperability with other tools, such as semantic search and searching by geographical locations. There is also the important question of the long-term sustainability of corpora made available via online interfaces, which CLARIN is attempting to address in a number of ways.

A final word

This is just the start of what looks like it will be a long-term collaboration. For CLARIN it brings the opportunity to fulfil its mission of supporting the use of advanced language tools and technologies across the social sciences and humanities, in an area of clear and direct benefit to society, by improving access to these vitally important historical records. For EHRI, we hope that the benefit will be that together we can contribute to improving the quality, quantity, sustainability, interoperability and accessibility of these oral testimonies, enabling them to be made available in a wider range of scenarios. The importance of oral testimonies is portrayed in this recent video:

https://www.youtube.com/embed/UlhK7ejRx5I

Many thanks to all participants in the workshop who provided input to the above listings. The summarization and comments are mostly mine and I take full responsibility for all errors. Please bear in mind that these listings are a snapshot in May 2023 of a fast-moving field.